When I visited the Mapping Festival I had the opportunity to sit down with Tom Butterworth (Bangnoise), Anton Marini (Vade) and David Lublin (Vidvox/VDMX). These guys’ presence was actually my main reason for going to the festival.

Anton and David live in NYC and see each other fairly often. Tom lives in Scotland and this is actually the third time the three of them have met in person. When I ask how they first met, the guys laugh as they realize it was through bug reports. Through the reports they gained appreciation for each other’s coding skills and ended up collaborating.

Many of us are familiar with the trio’s work, but for those who are not: Tom and Anton are the fathers of Syphon, a technology for sharing video in realtime between apps on Macs, as well as the v002 plugins for Quartz Composer. David works at Vidvox with the VJ software VDMX, and Tom and Vidvox made the Hap video codec that now works on both OS X and Windows, as well as several other plugins, apps and frameworks that they’ve shared with the public as open source over the years.

The trio communicates through instant messaging, email and GitHub comments. They have tried Skype a couple of times but they say they don’t really feel the need to see and hear each other.

Syphon

Is the traditional hardware video mixer now redundant? Are people already mixing from computer to computer over the network?

Tom: There are a few solutions for Syphon over network. There are a few different Max patches and a couple of apps. None of them are ideal for large frames and latency is sometimes a problem. I’ve tried my own solution and it isn’t as good as it needs to be.

Anton: Gigabit ethernet is not really fast enough to pass an uncompressed HD frame. We’ve thought long and hard about how we could possibly do this. With Thunderbolt 2 there is more bandwidth but still only 10 gig allocated for the network.

Tom: We continue thinking about this.
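Anton’s point about Gigabit Ethernet can be checked with some quick arithmetic. The sketch below is only an estimate: the frame size (1920x1080), the pixel format (4 bytes per pixel, 8-bit RGBA) and the frame rates are assumptions chosen for illustration, and protocol overhead is ignored.

```python
# Rough bandwidth estimate for uncompressed video over a network link.
# Assumptions (not from the interview): 1920x1080 frames, 4 bytes per
# pixel (8-bit RGBA), and no protocol overhead.

def required_gbit_per_s(width, height, bytes_per_pixel, fps):
    """Return the raw data rate in gigabits per second (1 Gbit = 1e9 bits)."""
    bits_per_frame = width * height * bytes_per_pixel * 8
    return bits_per_frame * fps / 1e9

GIGABIT_ETHERNET = 1.0  # nominal link rate in Gbit/s

for fps in (30, 60):
    rate = required_gbit_per_s(1920, 1080, 4, fps)
    fits = "fits" if rate <= GIGABIT_ETHERNET else "does not fit"
    print(f"1080p at {fps} fps: {rate:.2f} Gbit/s ({fits} in Gigabit Ethernet)")
```

Under these assumptions even 30 frames per second needs roughly twice the nominal capacity of a gigabit link, which is why the existing network solutions have to compress or downscale.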

I always thought that there was a max size to the Syphon texture, but I heard that’s wrong: it’s decided by the graphics card?

David: The application generating the texture may have a limit.

Tom: Yes, but the limit of Syphon is your graphics card’s texture dimension limit.

Anton: It’s 8k or 16k per texture.

David: It’s more than you are actually going to be able to do.

Tom: The program you’re using is probably unable to render a canvas that size, but Syphon is ready for it.

Are you still developing Syphon?

Tom: Yes, our near-term to-dos are OpenGL 3 and 4 support.

One thing we don’t have is a presence in Apple’s App Store but that one is going to be a pain. Apple don’t like one app talking to another app for security reasons.

Anton: Float texture support. Right now Syphon really only deals with 8-bit-per-channel textures and we want to support something with higher bit depth to get higher fidelity. A use case for that would be Kinect depth maps. If you have an image that is 32 or 16 bit floating point and you want to transmit it to another app, it clips and you lose precision.

All of these are totally solvable. It’s just about finding the time to do it, and making sure vendors update their Syphon version, and figuring out the fallback if apps are using different Syphon versions.
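To make the precision problem concrete, here is a minimal sketch of what happens when floating-point values are squeezed into an 8-bit channel. It does not use Syphon or any graphics API; the helper names `to_8bit` and `from_8bit` and the depth samples are hypothetical, chosen only to illustrate clamping and quantization.

```python
# Illustration of precision loss when a float value is squeezed into an
# 8-bit channel. The depth values below are made up for the example.

def to_8bit(value):
    """Clamp a float to [0.0, 1.0] and quantize it to one of 256 levels."""
    clamped = min(max(value, 0.0), 1.0)
    return round(clamped * 255)

def from_8bit(level):
    """Map an 8-bit level back to a float in [0.0, 1.0]."""
    return level / 255

depths = [0.5000, 0.5010, 0.5020, 1.7500]  # hypothetical depth samples
for d in depths:
    level = to_8bit(d)
    print(f"{d:.4f} -> level {level} -> {from_8bit(level):.4f}")
```

The first three samples land on the same 8-bit level, so the receiving app can no longer tell them apart, and the value above 1.0 is clipped to the maximum level.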

What about the Syphon-like attempts on Windows?

Tom: We’re interested to see where those things go. Wyphon was a bit of a focused solution to a single project. Spout seems to have more of an idea about what they are doing but it’s certainly a very young project.

Anton: We’re really glad they took the time to do it.

Is Syphon to Spout something we could see in the future?

Tom: We have discussed that a little bit with them and it would be good to continue that discussion.

Anton: It’s contingent on that bandwidth over network thing we talked about earlier.

What about Syphon for Unreal?

Anton: It’s totally doable. If it uses OpenGL and has a plug-in system it should be possible. But I haven’t asked anybody to make it. If there’s a need, somebody will make it.

David: I think someone makes a script that you can run and tell any OpenGL-enabled program to insert a Syphon server into it.

Anton: Oh, Mach Inject. It’s a technology where you have to have root user privileges. You launch your program and it overrides the system calls and inserts a Syphon server into any application that uses OpenGL. Supposedly it works but I’m a little paranoid about it. It’s not the ideal solution but a beautiful hack.

If you want to use Syphon to broadcast a video stream – are there any good solutions?

Tom: CamTwist is one way to do it; it’s the normal solution. (CamTwist is a free app that turns the input into a camera feed that video streaming solutions like Bambuser and Ustream can use.)

Anton: Jitter has a way to talk to IP cameras and RTSP streams so you can do that with Max MSP Jitter and go straight to a Syphon server.

Tom: I have an app I wrote for connecting a Canon camera to Syphon and someone contacted me on Twitter the other day and wanted some help with that – it was a webcam girl, she wanted the best possible image for her business.

Speaking of that, what is the weirdest Syphon workflow you’ve seen so far?

Tom: Our favorite at the moment is Alejandro Crawford, who is touring with MGMT. He flies a quadcopter over the crowd with a camera attached. That comes into VDMX and then goes over Syphon to Jitter and then into Unity and gets mapped onto objects and then that goes back into VDMX and creates a feedback loop – and it also goes out to Syphon Recorder.

Quartz Composer

There has been a lot of talk about the life and death of Quartz Composer. Has the popularity of Origami brought new life into Quartz Composer?

Tom: No, it’s a dying gasp.

Anton: From what I understand from talking to people at Apple, there is only one person who is on triage on Quartz Composer. They don’t have active developers, they’re only doing bug reports and very rare fixes. Everybody on the Quartz Composer team that was doing active development has been moved either to Core Graphics or OpenGL. Just because Facebook uses QC for prototyping doesn’t mean Apple gives a shit.

Math & programming

People tend to think that you need to be good at math to get into programming. Are you guys good at math?

Tom: I studied philosophy and literature and I’m very bad at math. I have to read, write down, check, double check, quadruple check and then send it to someone else who tells me it doesn’t work.

David: I studied math in high school and college. I can figure things out but I’m always slamming my head against the wall and double and quadruple checking myself. But when I do have to do it, it’s kind of a pleasure getting into the rhythm.

Anton: When I started out I was really, really bad at math. I think programming and working with live visuals and understanding how to make things has demystified a lot of it. It has exposed some common techniques that make me understand and appreciate math. GLSL has given me a better understanding of linear algebra since I had to wrap my head around it a million times.

Anton and David agree that they would have paid far more attention to math in school if they had had interesting examples, like GLSL shaders, to apply it to.

David Lublin

The new plugin system, ISF, that you recently introduced in VDMX, was that created because Quartz Composer is singing its last verse and because of the problems with Core Image’s memory leaks?

It was something that people had been asking about for a long time, but the problems with QC and Core Image made us push it further up our agenda.

Now that it’s picking up and people are starting to really use the effects, we are going to make a web site for people to share them. Someone has made an openFrameworks add-on so you can use them in oF too. That will help make it more of a standard.

We have made ISF versions of all our Core Image based plug-ins plus we added a few other ones.

Have you made any tutorials for how to make ISF plugins yet?

There are two tutorials but they are very introductory.

Have you thought about integrating Processing with VDMX?

I didn’t think it was easily possible. If we had somebody who knew Processing very well, we would be really interested in supporting Processing natively. We want to support as much stuff as we can. In fact, one of the discussions we’ve been having is how people doing openFrameworks development can make their stuff into a plugin without jumping through too many hoops.

How about loading 3D models straight in to VDMX?

Several people have asked me about it. 3D is not something we have done a lot of in the past so we’re trying to beef up our knowledge. Anybody who wants to work with 3D models in VDMX should send us emails saying how they think it should work.

One of the problems we’re dealing with is that VDMX uses an orthographic projection because it’s just video. But when you’re dealing with a 3D object you deal with different kinds of perspectives in OpenGL. So we don’t know if we need to let people set that for what they are working on, or if orthographic is fine, since we’re going to video anyway eventually.

And now that we have ISF plugins, we have the ability to write vertex shaders. Should we make it so that as part of the video source, you apply a vertex shader? How do we introduce effects at that point? Do we have 3D effects? What happens if we apply a 3D effect on a 2D layer? If you have a 3D layer, how should that work with other 3D layers? Do we rasterize it to 2D or do we let a 3D object travel around? That would mean a huge change to the rendering engine.
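The difference between the two projections David mentions can be sketched in a few lines. This is a deliberately simplified illustration, not VDMX’s renderer and not OpenGL’s actual projection matrices: the function names, the camera placed at the origin looking down the positive z axis, and the `focal_length` parameter are all assumptions made for the example.

```python
# Simplified comparison of orthographic and perspective projection.
# Assumption: the camera sits at the origin looking down the +z axis,
# and points are (x, y, z) tuples with z > 0.

def orthographic(point):
    """Drop the depth: distance from the camera does not change position."""
    x, y, _z = point
    return (x, y)

def perspective(point, focal_length=1.0):
    """Divide by depth: points further away move toward the center."""
    x, y, z = point
    return (focal_length * x / z, focal_length * y / z)

near = (1.0, 1.0, 2.0)
far = (1.0, 1.0, 4.0)

print("orthographic:", orthographic(near), orthographic(far))
print("perspective: ", perspective(near), perspective(far))
```

For flat video layers the two give the same kind of result up to scale, which is why orthographic is enough for a 2D pipeline. Once a layer has real depth, the perspective divide changes where each point lands on screen.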

Edge blending – is that something that should be in the app?

Yes, it’s something we have talked about and it might happen. We have talked about it in relation to whether we should add more mapping features, but there are so many projection mapping tools out there already. Edge blending is a special case that we might want to do anyway.

How about Vuo support? I’ve heard from the Vuo team that they are working with some of the VJ app vendors to make it possible to use Vuo in a similar way as Quartz Composer is used today.

They have development they need to do. And VDMX is currently still stuck in 32-bit mode while AVFoundation is getting its stuff sorted out. If it comes together we will be happy to support it.

Anything else new in VDMX?

Over the last few months we have slowed down on our feature releases because we have been revising a lot of our code so that we can make it more reusable and make sweeping changes throughout the app. It’s nice to have some time to improve the code and make the backend better even though the users don’t see it and appreciate it that much.

But yes, we have added a bunch of new effects and HID (game controller inputs) for the next release.

Name some artists that have inspired you.

The Cycling ’74 people were a huge inspiration; Max MSP made me love coding visual stuff.

I grew up with MTV, so Chris Cunningham, Spike Jonze and Michel Gondry.

Tom Butterworth

How do you describe your job?

Programming for video, video for performance ideally.

So living the dream?

Maybe, sort of, trying to make the dream realizable.

What’s the dirty work you need to do to make it realizable?

Less interesting programming. It’s still to do with video but some of it is less fun.

I am quite lucky that I sometimes do get paid to work on open source projects which I really enjoy. Vidvox in particular are really good about supporting open source.

How about crowd funding? I know you tried it for the dance project “Fiend”.

I’m not a fan of crowd funding for arts projects. It’s basically begging from your friends. We had Arts Council funding from the U.K. government and it was a way to supplement that. We just asked for a very small amount.

I’ve seen other people fail with crowd funding of software.

That’s different, that kind of crowd funding might work because in the end the backers get a product. I don’t think it’s the ideal way to fund software development, I’d rather just get paid to do it.

Could there be some kind of app store economy to it where you can pay a small sum and receive an app?

I don’t think that’s interesting. For a lot of things it’s important they’re open source. Nobody benefits if people have to remake basic tools all the time. It’s much more interesting if you can just say: here’s a tool that is useful and then everybody could just use that and move on together and think of what the next development is to make it better. Things like Syphon and the Hap codec, their success depends on their take-up.

We get some generous donations from companies using Syphon and Hap but we don’t really get donations from individuals – though there are some exceptions that we are really grateful for.

How did the Fiend project go?

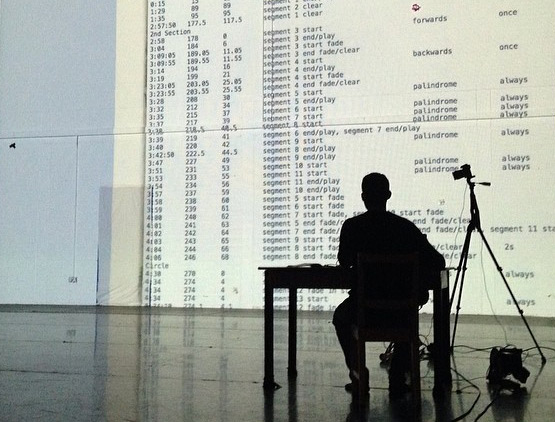

We have only performed it once but we got really good feedback. We have a couple more dates coming up. It has been really fun to do and it has been totally new for me – I normally sit at my desk and write code and send it to someone a few weeks later. So it’s quite different to sit with a dancer in a studio who wants you to make a change in 10 seconds. In the piece, I have a table and chair on the stage with my back to the audience. I manipulate all the video live. I think that is as close to being a performer as I will ever get.

Now a user question: Will the Datamosh plugin for Quartz Composer ever be updated to do full HD?

It does full HD. I guess the user’s computer is not fast enough. I’ve used it in 1080 and I’ve rendered out huge canvases in Quartz Crystal, even if that’s not quite the same. But yes, I should upgrade it. It would be good to try a version with 64-bit support using AVFoundation.

And the Video Delay plugin – if you want to use it live to create a slit scan effect, is there a way to make it respond faster?

I used it for a section in my latest dance piece. While developing I had a 2010 MacBook Pro and just before we did our first performance I got a new laptop and that made a lot of difference. So once again, buy a faster computer.

The plugin is limited because you can change the delay map while running the composition, so it has to save every full frame of video to create the delay. All of that happens in main memory because it is too much to keep on the GPU, so then I have to assemble each frame on the CPU and that’s a laborious process.

If you want to use it for slit scan, try the ISF plugin David did for VDMX. Because the map is never going to change it doesn’t need to store every frame, so it’s a very fast operation.
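Tom’s distinction between a changeable delay map and a fixed one can be illustrated with a small sketch. This is not the code of the Video Delay plugin or of the ISF slit scan effect; the class name `FixedSlitScan`, the per-row delays and the tiny one-value “rows” are all hypothetical. The idea shown is only that when the delays never change, each row needs to remember just as much of its own history as its delay requires, instead of every full frame.

```python
from collections import deque

class FixedSlitScan:
    """Slit scan with a fixed per-row delay, measured in frames.

    Because the delays never change, each row keeps only as much of its
    own history as its delay requires, rather than every full frame.
    """

    def __init__(self, row_delays):
        # One bounded history per row; old rows fall out automatically.
        self.histories = [deque(maxlen=delay + 1) for delay in row_delays]

    def process(self, frame):
        """Take one frame (a list of rows) and return the delayed frame."""
        output = []
        for row, history in zip(frame, self.histories):
            history.append(row)
            # Oldest stored row. Until the history has filled up, this is
            # simply the earliest row seen so far.
            output.append(history[0])
        return output

# Three rows delayed by 0, 1 and 2 frames; each row holds the frame number.
scan = FixedSlitScan(row_delays=[0, 1, 2])
for t in range(4):
    print(scan.process([t, t, t]))
```

A version with a delay map that can change at any moment could not bound the histories like this, since any row might suddenly need any past frame. That is the situation Tom describes for his plugin.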

Name some artists that have inspired you.

Toby (Spark) Harris’ nonlinear cinema.

Stan Brakhage’s silent hand-painted films.

Anton Marini

Where do you work?

Right now I’m a software developer for a startup called Ultravisual, I do that full time. It’s a multimedia social network. Video, photos, Instagram-like filters, editing and stop motion. You can curate content with multiple people together. So you and I could for instance have a Swedish VJ Union jam where we could both put images and video and repost, remix, that sort of stuff.

Tell us about v002, your performance app

It’s been a hobby, something I’ve been working on a lot. It started as an open source project, as a very simple tool. But it ended up very cluttered because I built it very quickly and it didn’t have a good philosophy behind it. Then I did a residency at Eyebeam and redesigned quite a bit of the app and it became node-based and elegant.

Tom has been really helpful with it, he has been doing a lot of work implementing it. My role is that I work on the design pipeline and interface ideas. When Tom and I met in New York, he was staying with me and we spent a few days talking about this app and how we wanted it to work and different ideas we had for it.

What I would ideally like to do is to walk into a show with nothing prepared. Imagine: It’s like you’re a musician and you just improvise, so you would need to be able to play the software like an instrument. And what that doesn’t mean is that you have a preconfigured setup. It means that you’re able to make an entire composition in realtime and perform it live.

So everything about the application is about eliminating the latency between when you want to do something and when it gets done. I get very frustrated at times with certain tools where you have to do so much work ahead of time to set it up and to me that defeats the whole concept of realtime. If I have to do everything beforehand and then I hit a button and it plays it and then I hit a button that automates it, then why not make a movie and play a movie? If it’s realtime – then let’s make it fucking realtime!

So the idea is that the entire spectrum of the way the software is designed, from the interface to performing, is improvisational. Meaning the whole thing is an instrument that is meant to be elegant and simple and that means that you need to remove a lot of stuff. You start with a blank canvas: when you create a movie player, you get a movie player with the interface hidden. If you want the movie player interface, you can bring it out. You can record gestures into the interface and when you stop recording, it plays it back. The idea is that you can modulate and affect your performance. You can slow things down, you can speed it up and you could quantize. But you don’t quantize the image, you’re quantizing the performance. It allows you to very quickly move from one mood to another. It’s about being very flexible and very improvisational, that’s the goal of the app. The whole thing is a design experiment, it’s not meant to replace VDMX or Modul8, but what I think it would do is to swing the pendulum far in the other direction.

The app is supposed to be out but it never got finished. I don’t know if I ever will release it. But if I do it will be open source, that’s the only thing that makes sense.

People who follow you on social media can hear you moan a lot about OS X – what’s stopping you from switching to Linux?

Because it’s even more of a pain in the ass! I just spent a bunch of time trying to develop some stuff on the Raspberry Pi and I have never been so frustrated in my life. When I updated the Raspbian operating system, it installed the experimental version of the OpenGL driver on its own, and suddenly my application ran at half the frame rate.

Wasn’t there a flag you could set in the installation command?

No. I spoke to the people responsible and they said “yeah, we probably shouldn’t have done that”. So it’s because it’s a bunch of people that are hacking on it, I understand it.

Honestly, I’ve been thinking of going to Windows. Because there’s really great apps on Windows like TouchDesigner that I’m really interested in – it looks phenomenal. And a lot of my friends are using it and they are making really good work with it. It’s very similar to Max MSP, and I love Max. And Apple is clearly focusing on iOS. They’re making iPhones and that’s all they care about – fuck ’em.

If you could sum up all your OS X frustration in one word – what would it be?

“Seriously?!”

Name some artists that have inspired you.

Memo Akten, he is amazing. I like the work that he does and his general philosophy and that he shares his work.

Jasch, a Max MSP user who makes phenomenal generative stuff. That’s the stuff I was chasing, I wanted to get to that level.

Summing it all up

After tracking these guys online for years, it was great to finally meet them.

Tom is one of the most humble people I’ve ever met. He is not even bothered when other people get credit for things he has done. I never thought about the fact that Tom hadn’t been a performer until his recent “Fiend” piece. Before that he had just been solving problems for other people.

Anton is way more nuanced and verbal than you might expect from following his social media feed. Even if he curses Apple on a daily basis he knows that the VJ scene is such a marginal target group that all the defects that we suffer from will probably never get fixed.

David is an intense guy who seems to be always on. He was in workshop mode the entire festival. Whenever someone had a question a laptop magically appeared and suddenly you saw a group of people huddling over the screen and you heard him explaining advanced VDMX concepts but making them sound as easy as iMovie.

I will probably never be able to sit down with all three again but I hope to catch them all individually in a nonlinear future.

Read the report from the 2014 edition of the Mapping Festival.
